Difference between revisions of "IoT Data Ingestion"

Allen.chao (talk | contribs) (add azure stream analytics)

Allen.chao (talk | contribs) (add azure stream analytics input)

||

| Line 5: | Line 5: | ||

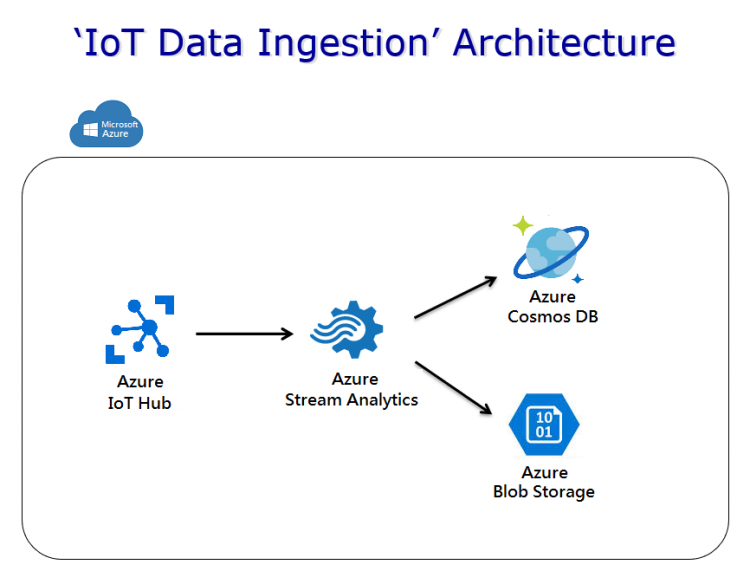

When an Azure IoT hub receives messages, we use Azure stream analytics job to dispatch the received data to other Azure services. In this case, we will ingest data into Azure CosmosDB and Azure blob storage. | When an Azure IoT hub receives messages, we use Azure stream analytics job to dispatch the received data to other Azure services. In this case, we will ingest data into Azure CosmosDB and Azure blob storage. | ||

| − | The IoT hub we're using to receive messages is named '''"azurestart-hub"'''. All Azure resources used in this section are stored in single Azure resource group named '''"azurestart-rg"'''. | + | The IoT hub we're using to receive messages is named '''"azurestart-hub"'''. All Azure resources used in this section are stored in single Azure resource group named '''"azurestart-rg"'''. |

[[File:IoTDataIngestion-az.png|center|750px|iot data ingest flow]] | [[File:IoTDataIngestion-az.png|center|750px|iot data ingest flow]] | ||

| Line 15: | Line 15: | ||

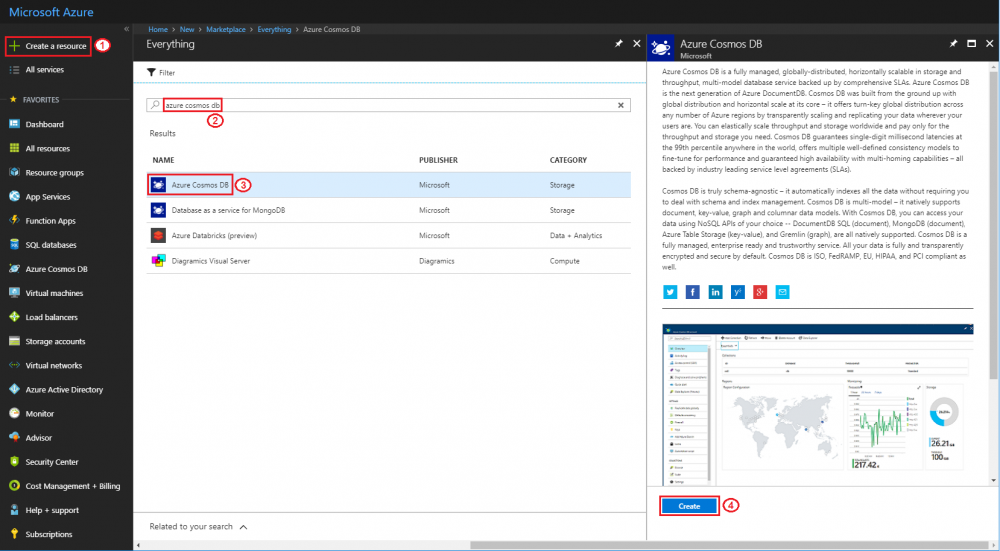

*Search "azure cosmos db" and create new one | *Search "azure cosmos db" and create new one | ||

| − | [[File: | + | [[File:Azure-cosmos-new.png|center|1000px|create cosmos DB]] |

*ID: '''azurestart-cosmos''' | *ID: '''azurestart-cosmos''' | ||

| Line 21: | Line 21: | ||

*Resource Group: click '''"Use existing"''' and select '''"azurestart-rg"''' | *Resource Group: click '''"Use existing"''' and select '''"azurestart-rg"''' | ||

| − | [[File: | + | [[File:Azure-cosmos-detail.png|center|450px|cosmos DB settings]] |

Step 2: Create an Azure Blob Storage | Step 2: Create an Azure Blob Storage | ||

| Line 27: | Line 27: | ||

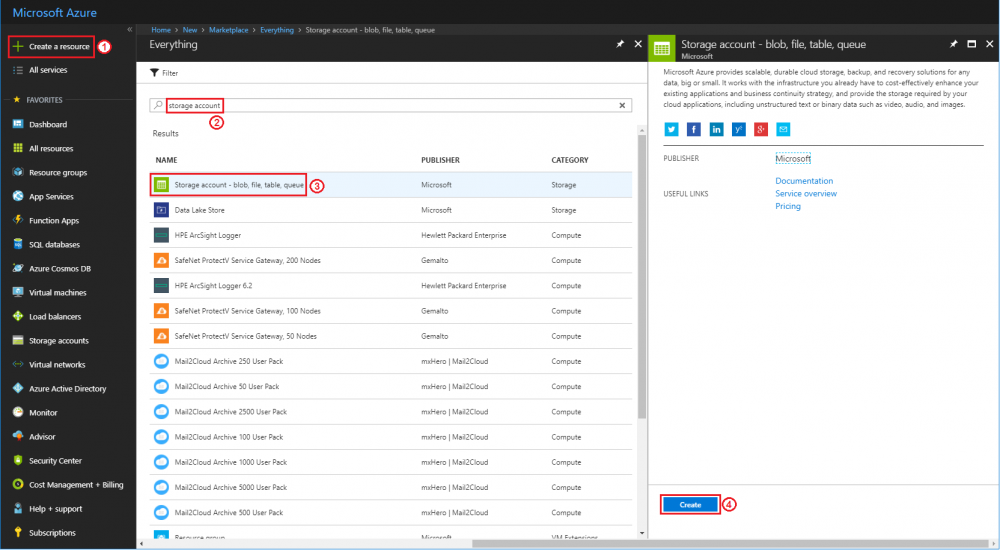

*Search “storage account” and create new one | *Search “storage account” and create new one | ||

| − | [[File: | + | [[File:Azure-blob-new.png|center|1000px|create blob storage]] |

*Name: '''azurestartblob''' | *Name: '''azurestartblob''' | ||

| Line 33: | Line 33: | ||

*Resource Group: click '''"Use existing"''' and select '''"azurestart-rg"''' | *Resource Group: click '''"Use existing"''' and select '''"azurestart-rg"''' | ||

| − | [[File: | + | [[File:Azure-blob-detail.png|center|450px|blob storage settings]] |

== Connect Azure IoT hub to Azure storage == | == Connect Azure IoT hub to Azure storage == | ||

| Line 39: | Line 39: | ||

Step 1: Login to Azure portal and create a Stream Analytics job | Step 1: Login to Azure portal and create a Stream Analytics job | ||

| − | * Search "stream analytics" and create new one | + | *Search "stream analytics" and create new one |

| − | [[File: | + | [[File:Azure-sa-new.png|center|1000px|create sa]] |

| − | * Job name: '''azurestart-sa''' (for example) | + | *Job name: '''azurestart-sa''' (for example) |

| − | * Resource Group: click '''"Use existing"''' and select '''"azurestart-rg"''' | + | *Resource Group: click '''"Use existing"''' and select '''"azurestart-rg"''' |

| − | * Hosting environment: '''Cloud''' | + | *Hosting environment: '''Cloud''' |

| − | [[File: | + | [[File:Azure-sa-detail.png|center|450px|sa settings]] |

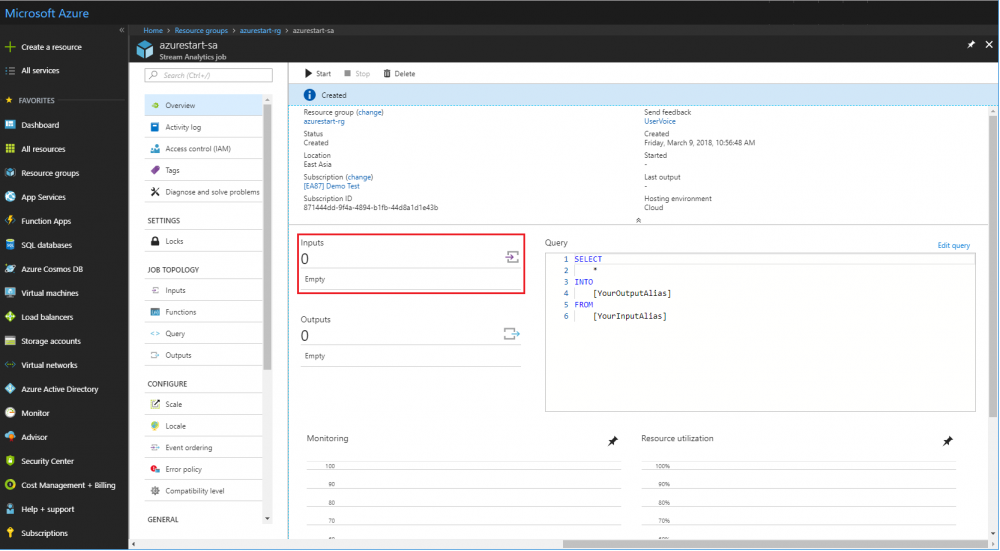

Step 2: Set input of stream analytics job | Step 2: Set input of stream analytics job | ||

| − | * Open "azurestart-sa" created at last step: '''Resource groups''' → '''azurestart-rg''' → '''azurestart-sa''' | + | *Open "azurestart-sa" created at last step: '''Resource groups''' → '''azurestart-rg''' → '''azurestart-sa''' |

| + | |||

| + | [[File:Azure-sa.png|center|1000px|open sa]] | ||

| + | |||

| + | *Click '''"Inputs"''' | ||

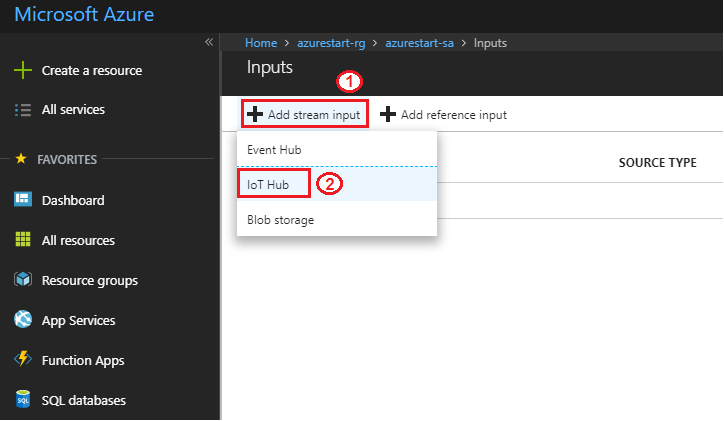

| − | [[File: | + | [[File:Azure-sa-input.png|center|1000px|open sa input]] |

| − | * | + | *'''"Add stream input"''' → '''"IoT Hub"''' |

| − | [[File: | + | [[File:Azure-sa-input-add-iothub.png|center|1000px|sa add input: iot hub]] |

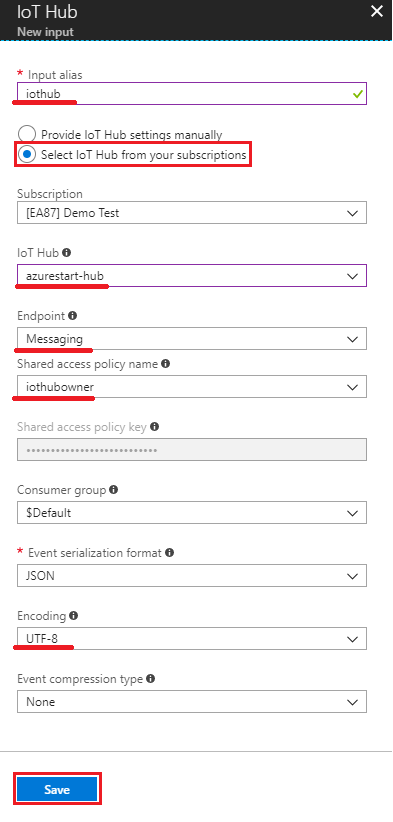

| − | * ''' | + | * Input alias: '''iothub''' |

| + | * Choose '''"Select IoT Hub from your subscriptions"''' | ||

| + | * IoT hub: '''azurestart-hub''' | ||

| + | * Endpoint: '''Messaging''' | ||

| + | * Shared access policy name: '''iothubowner''' | ||

| + | * Encoding: '''UTF-8''' | ||

| − | [[File:azure-sa-input-add-iothub.png|center| | + | [[File:azure-sa-input-add-iothub-detail.png|center|450px|sa input detail: iot hub]] |

= AWS = | = AWS = | ||

Revision as of 17:59, 11 March 2018


Azure

This section describes how to move data from an Azure IoT hub into different Azure data stores. We assume that device messages are already being received by an Azure IoT hub. If they are not, refer to the Protocol converter with Node-Red section, which covers ingesting IoT data from edge devices into Azure IoT hubs.

Once an Azure IoT hub receives messages, we use an Azure Stream Analytics job to dispatch the received data to other Azure services. In this case, we will ingest data into Azure Cosmos DB and Azure Blob storage.

The IoT hub we use to receive messages is named "azurestart-hub". All Azure resources used in this section are stored in a single Azure resource group named "azurestart-rg".

Create storage to save ingested IoT data

Step 1: Log in to the Azure portal and create an Azure Cosmos DB account

- Search for "azure cosmos db" and create a new one

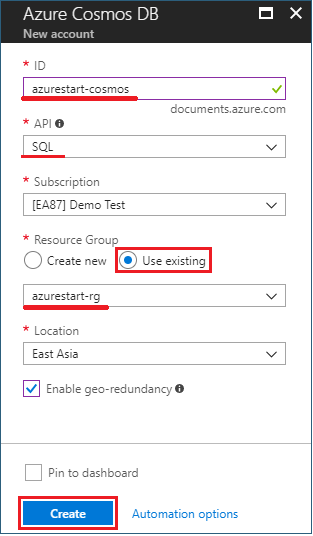

- ID: azurestart-cosmos

- API: select "SQL"

- Resource Group: click "Use existing" and select "azurestart-rg"

Step 2: Create an Azure Blob Storage

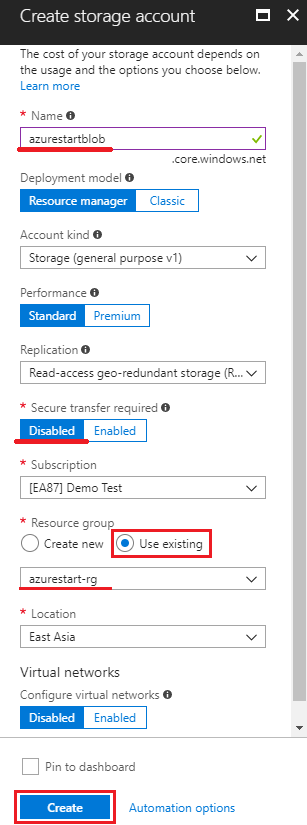

- Search “storage account” and create new one

- Name: azurestartblob

- Secure transfer required: Disabled

- Resource Group: click "Use existing" and select "azurestart-rg"
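Unlike the other resource names in this section, "azurestartblob" contains no hyphen. Azure storage account names must be 3 to 24 characters long and may contain only lowercase letters and digits. The following is a minimal sketch of that rule in Python; the function name is hypothetical and is not part of any Azure SDK.

```python
import re

# Naming rule for Azure storage accounts:
# 3-24 characters, lowercase letters and digits only.
_STORAGE_NAME = re.compile(r"[a-z0-9]{3,24}")


def is_valid_storage_account_name(name: str) -> bool:
    """Return True if `name` satisfies the storage account naming rule.

    Hypothetical helper for illustration; it does not check whether the
    name is already taken, which Azure also requires.
    """
    return _STORAGE_NAME.fullmatch(name) is not None


print(is_valid_storage_account_name("azurestartblob"))   # True
print(is_valid_storage_account_name("azurestart-blob"))  # False: hyphen is not allowed
```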

Connect Azure IoT hub to Azure storage

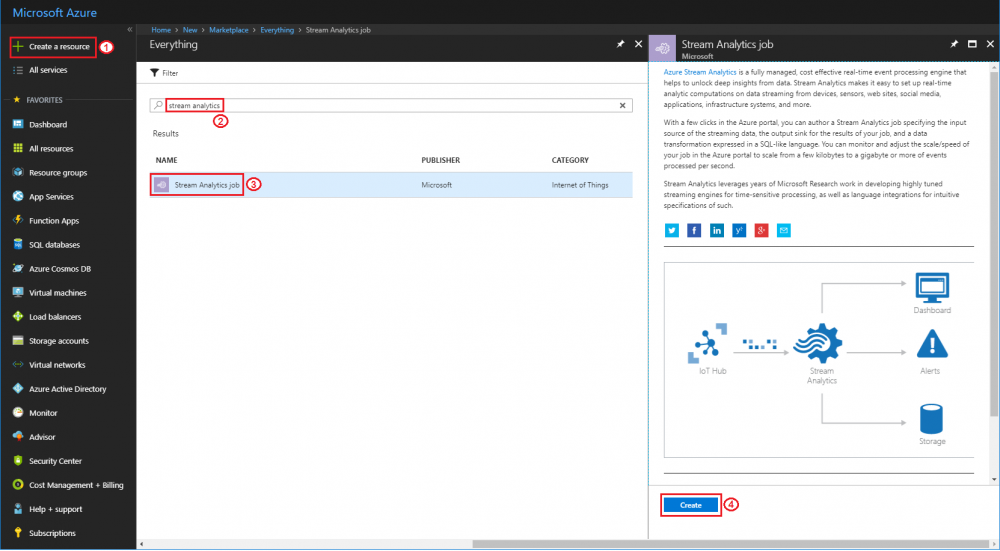

Step 1: Log in to the Azure portal and create a Stream Analytics job

- Search for "stream analytics" and create a new one

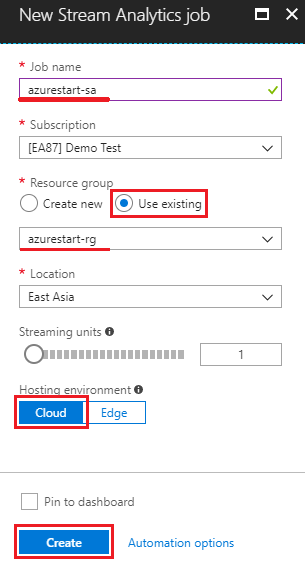

- Job name: azurestart-sa (for example)

- Resource Group: click "Use existing" and select "azurestart-rg"

- Hosting environment: Cloud

Step 2: Set input of stream analytics job

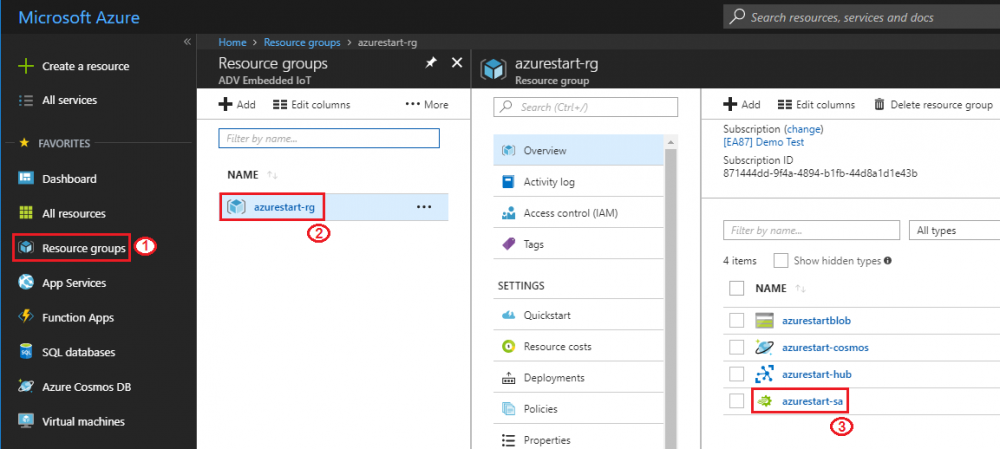

- Open "azurestart-sa" created at last step: Resource groups → azurestart-rg → azurestart-sa

- Click "Inputs"

- "Add stream input" → "IoT Hub"

- Input alias: iothub

- Choose "Select IoT Hub from your subscriptions"

- IoT hub: azurestart-hub

- Endpoint: Messaging

- Shared access policy name: iothubowner

- Encoding: UTF-8

AWS

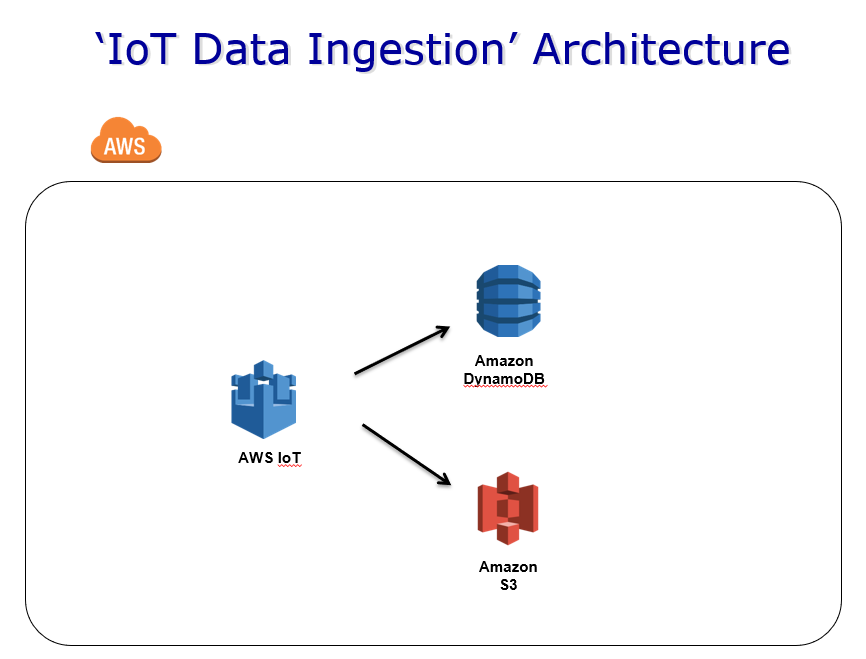

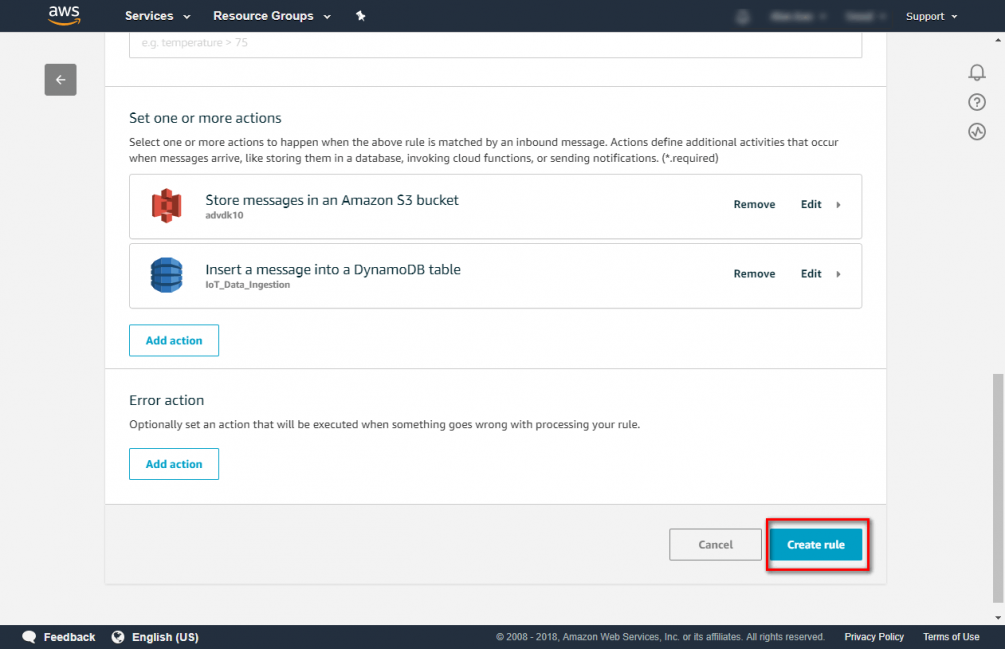

This section shows how to use the AWS IoT rule engine to store data in different AWS storage services. To get IoT data into AWS IoT in the first place, refer to Protocol converter with Node-Red.

Once AWS IoT receives IoT data, you can use the rule engine to forward it to other AWS services. In this case, we will send IoT data to AWS S3 and DynamoDB.

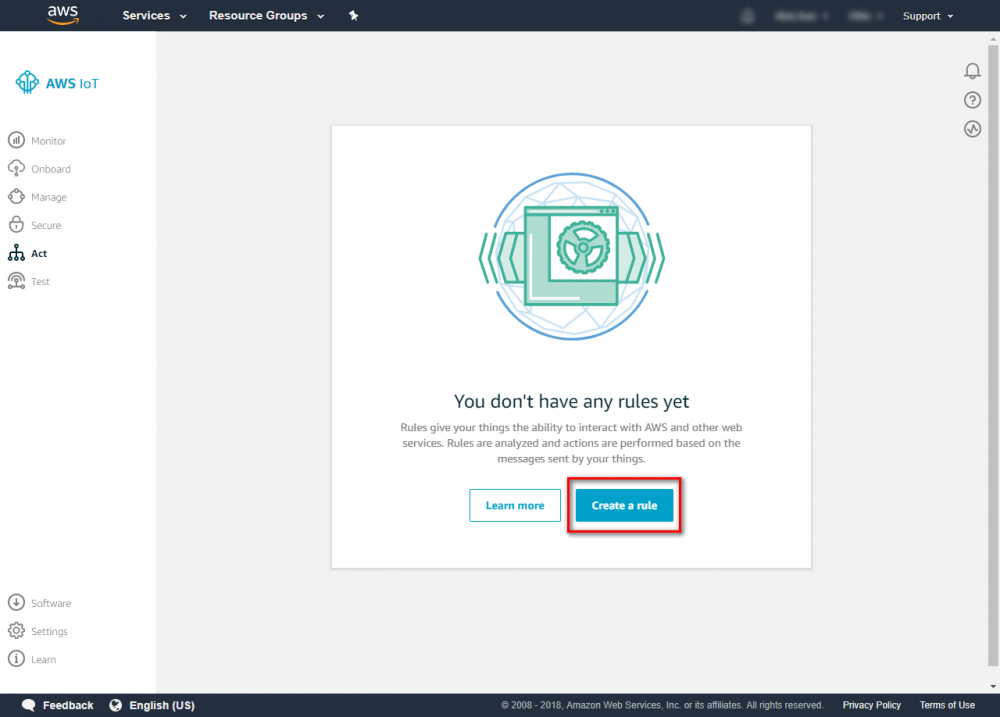

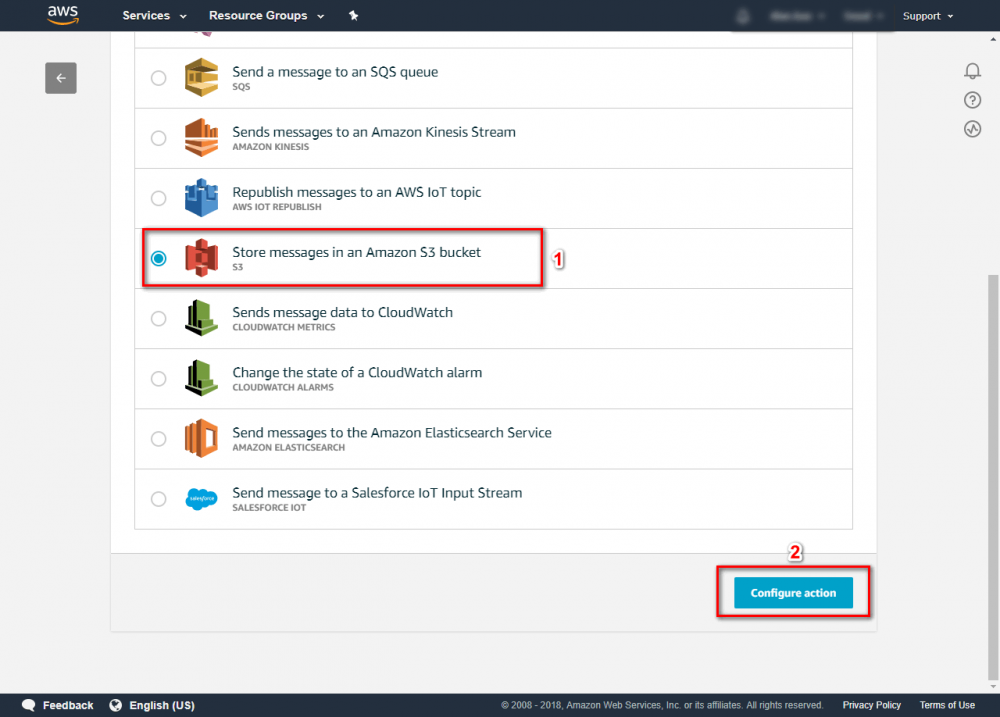

Step 1. Go to the AWS IoT console, click "Act", and then click "Create rule".

Step 2. Enter {your rule name} and {description}.

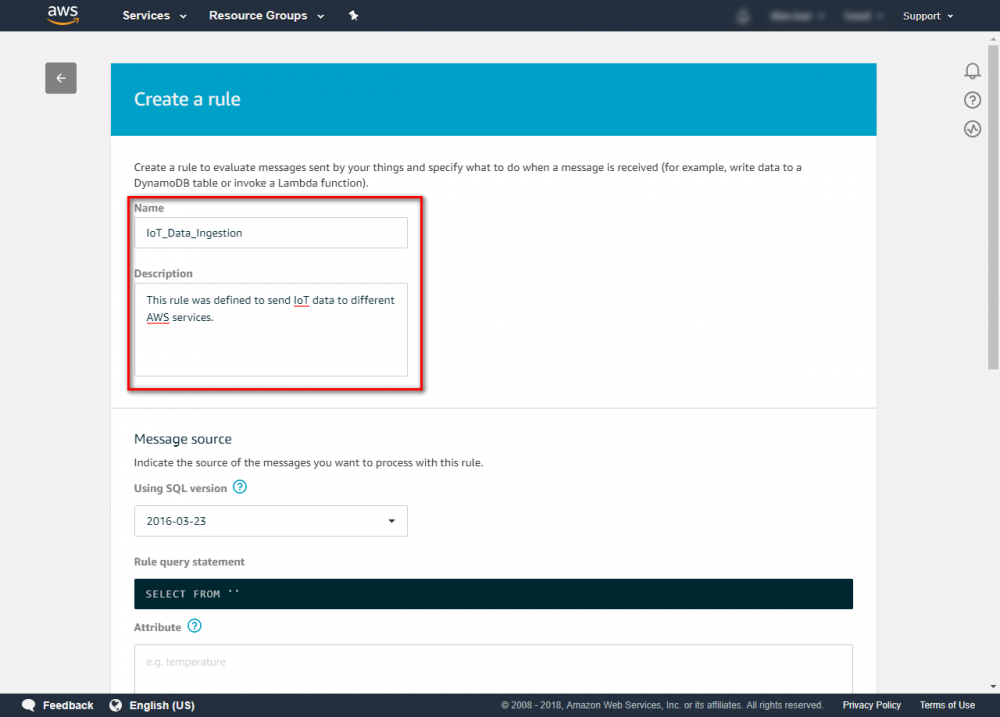

Step 3. Configure the rule as follows:

- Attribute: *
- Topic filter: {your AWS IoT publish topic}

The topic used in the protocol converter is “protocol-conn/{Device Name}/{Handler Name}”. In this case, we use the wildcard # to receive all messages. More topic information can be found at
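To illustrate how the # wildcard in the topic filter behaves, here is a simplified Python sketch of standard MQTT wildcard matching. It is not AWS code. The filter "protocol-conn/#" and the device and handler names used below are assumptions chosen for illustration.

```python
def topic_matches(topic_filter: str, topic: str) -> bool:
    """Return True if `topic` matches an MQTT-style `topic_filter`.

    Supports the single-level wildcard "+" and the multi-level wildcard "#".
    Simplified sketch: it does not handle "$"-prefixed reserved topics.
    """
    filter_levels = topic_filter.split("/")
    topic_levels = topic.split("/")

    for i, level in enumerate(filter_levels):
        if level == "#":
            # "#" is only valid as the last level; it matches everything
            # that remains, including nothing at all.
            return i == len(filter_levels) - 1
        if i >= len(topic_levels):
            # The filter has more levels than the topic.
            return False
        if level != "+" and level != topic_levels[i]:
            return False

    # Without "#", both must have the same number of levels.
    return len(filter_levels) == len(topic_levels)


# Hypothetical device and handler names.
print(topic_matches("protocol-conn/#", "protocol-conn/device01/modbus"))  # True
print(topic_matches("protocol-conn/#", "other/device01/modbus"))          # False
```

A filter consisting of # alone matches every topic, so prefixing it with "protocol-conn/" is one way to limit the rule to messages published by the protocol converter.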

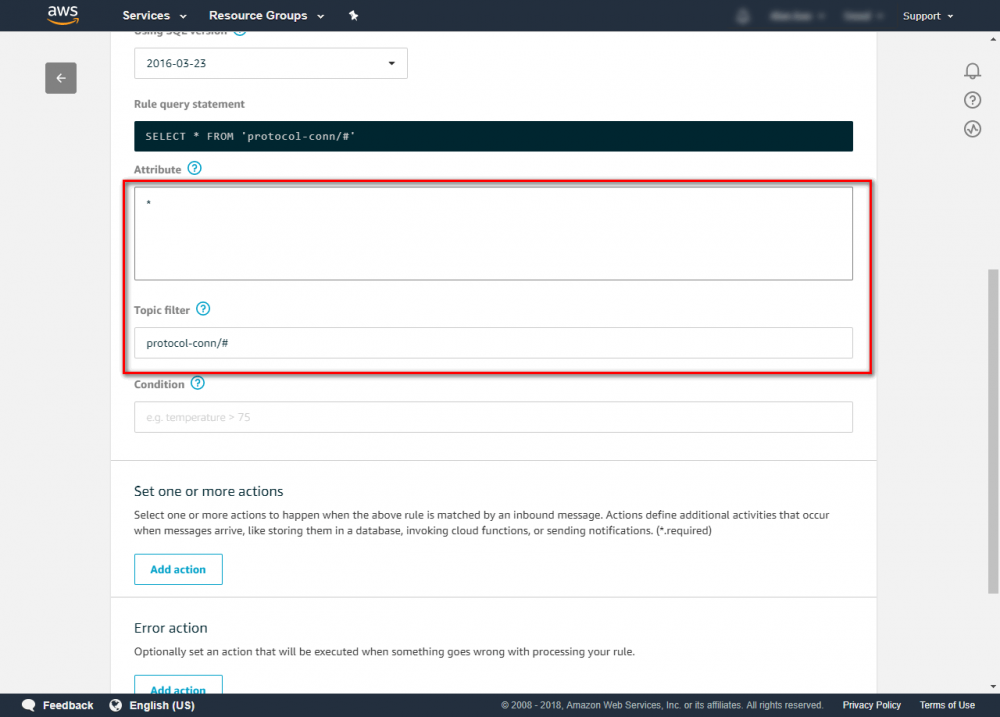

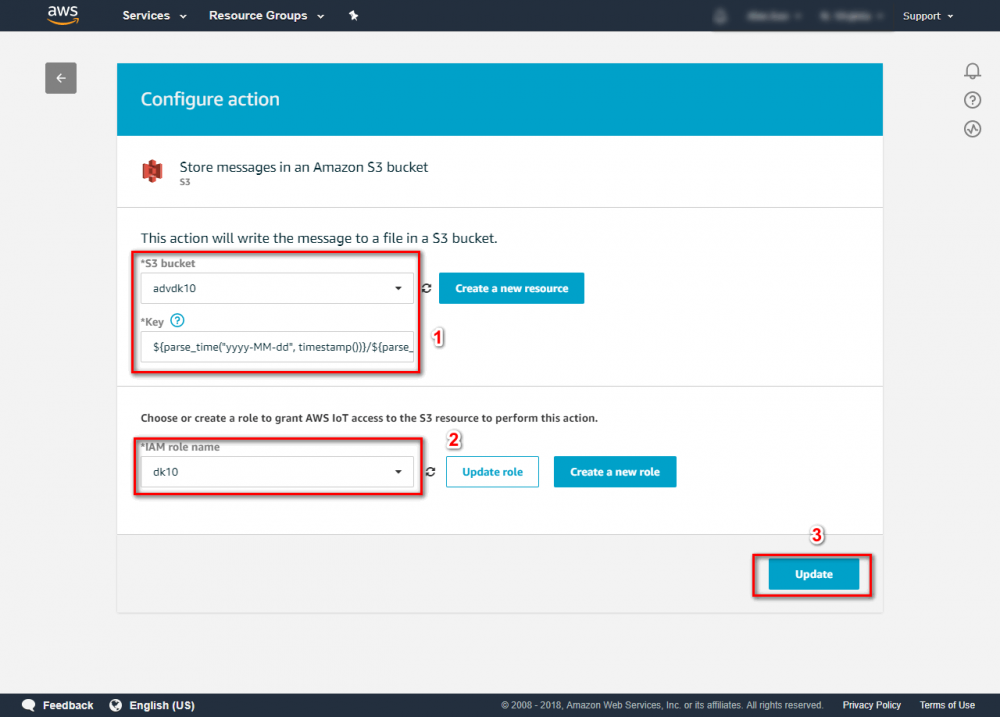

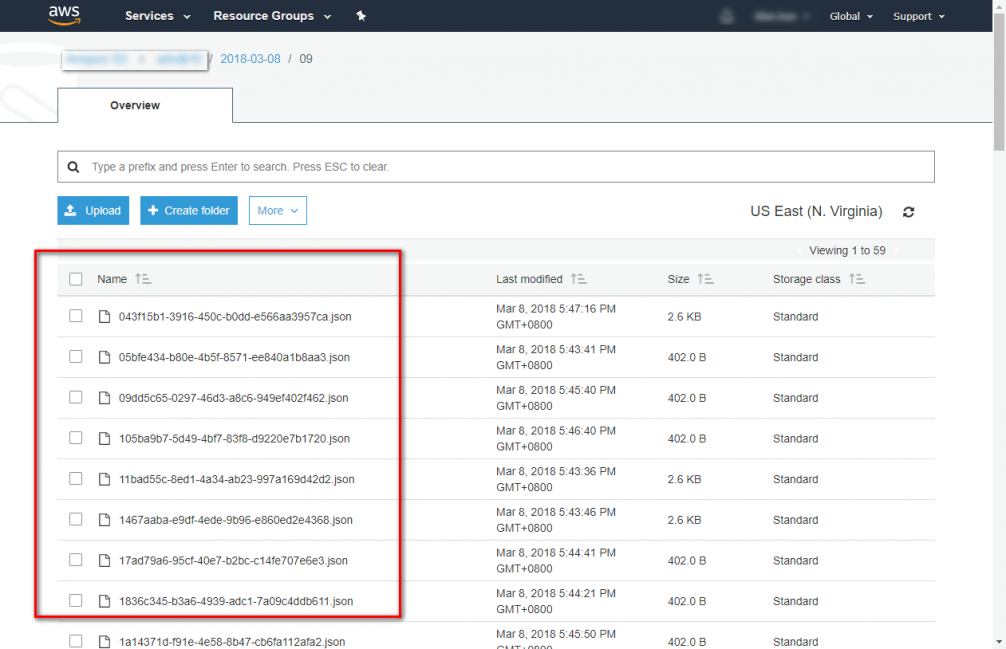

Step 4. Click “Add action” to store messages in an S3 bucket. Select “Store message in an Amazon S3 bucket” → click “configure action”. More rule engine information can be found at

Step 5. On the configure action page, choose an S3 bucket. If you don't have one, click “Create a new resource” to create one. In this case, we store the data in JSON format, organized into folders by date and hour. You can use the AWS IoT SQL functions parse_time() and timestamp() to build the folder path, and newuuid() to generate the file name:

${parse_time("yyyy-MM-dd", timestamp())}/${parse_time("HH", timestamp())}/${newuuid()}.json

More AWS IoT SQL reference information can be found at
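To make the resulting object keys concrete, the following Python sketch approximates what the key template above produces. It is only an emulation using the standard library, under the assumption that the timestamp is formatted in UTC; the function name is hypothetical.

```python
import uuid
from datetime import datetime, timezone


def s3_key_for(now: datetime) -> str:
    """Approximate the S3 key built by the rule's key template.

    Emulation for illustration only; the real key is generated by the
    AWS IoT rule engine, not by this code.
    """
    day = now.strftime("%Y-%m-%d")  # parse_time("yyyy-MM-dd", timestamp())
    hour = now.strftime("%H")       # parse_time("HH", timestamp())
    name = uuid.uuid4()             # newuuid(): a random UUID
    return f"{day}/{hour}/{name}.json"


# A message arriving at 17:59 UTC on 11 March 2018 lands in folder 2018-03-11/17/.
print(s3_key_for(datetime(2018, 3, 11, 17, 59, tzinfo=timezone.utc)))
```

Because every message gets its own UUID-named file, messages never overwrite each other, and the date and hour folders keep each prefix to a manageable size.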

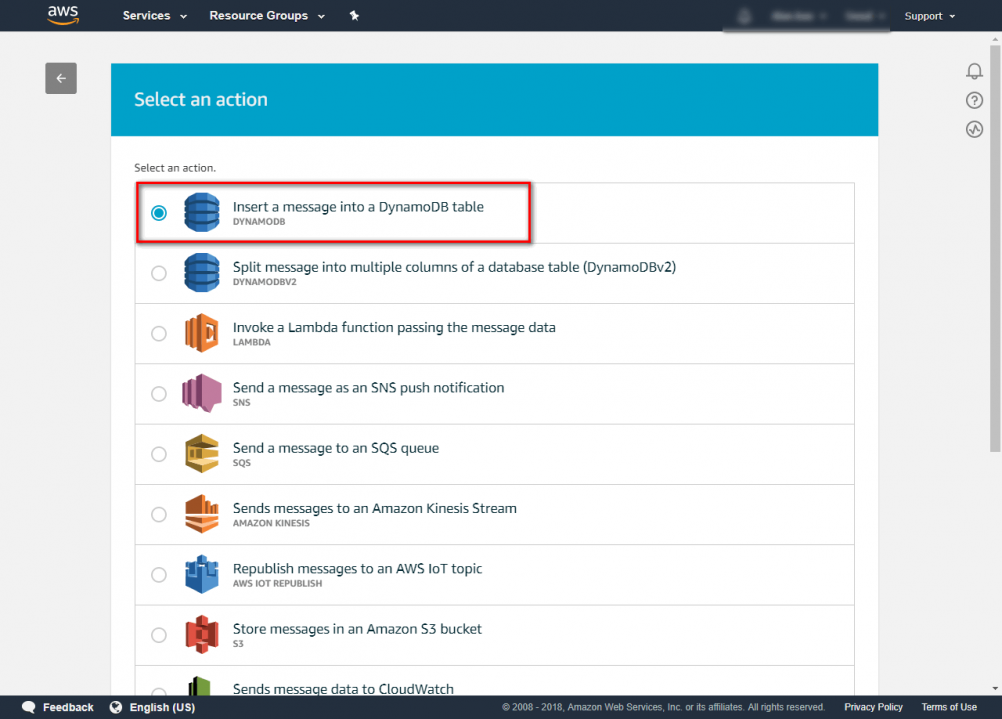

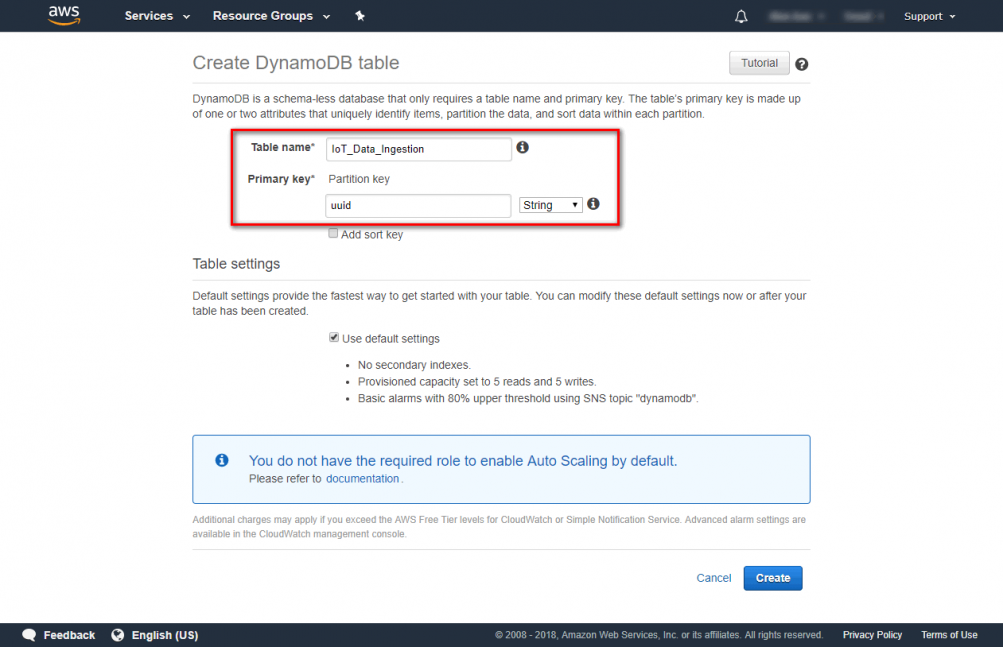

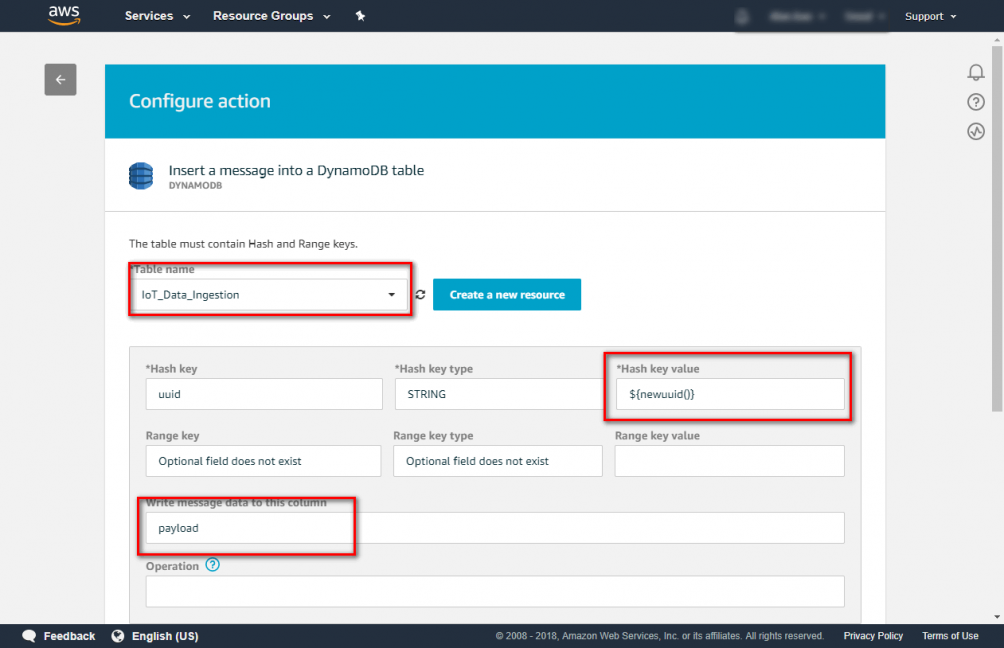

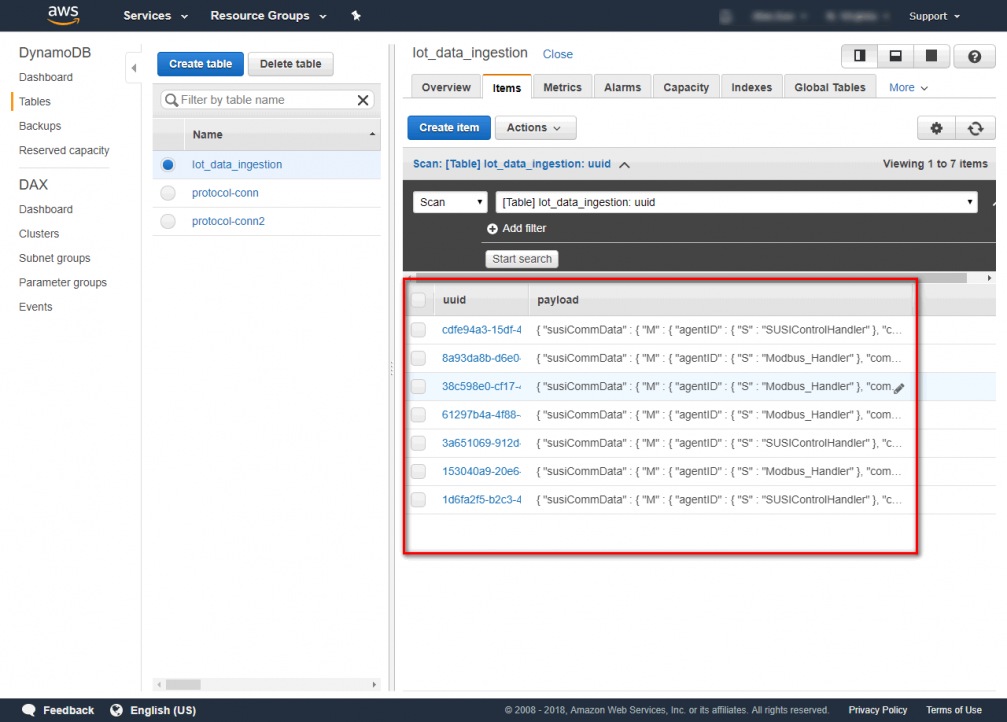

You need to choose a role that has permission to access AWS S3. After finishing the settings, click update.

Step 6. Click “add action” to connect DynamoDB.

Step 7. Select “Insert a message into a DynamoDB Table” → click “configure action”.

Step 8. Choose a DynamoDB table. If you don't have a DynamoDB table, click “Create a new resource” to create one. In this case, we create a DynamoDB table called “IoT_Data_Ingestion” whose primary key is “uuid”.

Step 9. Choose “IoT_Data_Ingestion” and fill in the following fields:

- Hash key value: ${newuuid()}
- Write message data to this column: payload

You need to choose a role that has permission to access AWS DynamoDB. After finishing the settings, click update.

Step 10. When all settings are complete, click “create rule”.

Step 11. Now you can publish a message and check S3 and DynamoDB.
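As a rough picture of what Step 9 configures, the sketch below builds the logical shape of one table item in Python: a freshly generated "uuid" hash key plus the message stored under the "payload" column. This shows the item's shape only, not DynamoDB's wire format or an SDK call. The function name and the sample message are hypothetical.

```python
import json
import uuid


def build_item(message: dict) -> dict:
    """Sketch of the item the rule writes for one incoming message.

    Illustration only; the actual write is performed by the AWS IoT
    rule engine.
    """
    return {
        "uuid": str(uuid.uuid4()),  # Hash key value: ${newuuid()}
        "payload": message,         # Write message data to this column: payload
    }


# Hypothetical device message.
item = build_item({"temperature": 21.5, "unit": "C"})
print(json.dumps(item, indent=2))
```

Since the hash key is a new UUID for every message, each published message becomes a separate item rather than updating an existing one.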